12.4. WAL Data Filtering and Buffering

Alpha Version: Work in progress.

This section describes the data filtering and buffering mechanism within the ReorderBuffer.

The discussion begins with the multi-stage filtering process executed by the walsender, which determines which records are eligible for decoding. This is followed by a practical demonstration of how the ReorderBuffer accumulates these changes through a concrete transaction scenario.

Furthermore, it explains the differences in WAL data storage for UPDATE and DELETE statements based on the Replica Identity.

Finally, the section provides an overview of the “Spill to Disk” mechanism, which is triggered when the ReorderBuffer exceeds its memory limit.

12.4.1. Filtering

The mechanism by which the walsender decides whether to decode a WAL record and register it in the ReorderBuffer’s changes list operates as a multi-stage filtering gate.

- 1st Gate (Database OID):

Immediately discards WAL records belonging to databases other than the target database. - 2nd Gate (Origin ID / Callback):

Since version 16, the origin is checked before data enters the ReorderBuffer. If origin = none is configured, any change originating from another node (origin_id > 0) is aborted and discarded at this stage. - 3rd Gate (Publication Cache):

Since version 15, table-level filtering is also performed before buffering. Changes to tables not included in a publication, or rows excluded by Row Filters, are skipped without being accumulated in the buffer. - 4th Gate (Relation Type / Physical Structure):

The walsender also filters out WAL records related to index updates. This is because logical replication focuses on row-level data changes; once the apply worker updates a tuple on the subscriber side, the subscriber’s own indexing mechanism automatically handles the associated index updates.

Only changes that successfully pass through all these gates are stored in the ReorderBuffer.

Prior to version 15, the process was as follows:

- Check the database OID of the WAL record (version 10 or later).

- Decode the WAL record and accumulate all resulting changes in the ReorderBuffer.

- Filter changes against the publication definitions only at the time of COMMIT.

In these versions, table-level filtering was evaluated lazily. Consequently, the ReorderBuffer consumed memory unnecessarily by accumulating changes that would eventually be discarded at commit time.

12.4.2. WAL Data Buffering

To understand how the walsender reconstructs logical changes from raw WAL data, the buffering process is examined through a practical transaction interleaving scenario.

12.4.1.1. Setup: Target Relations

The scenario utilizes two tables, tbl_a and tbl_b, both configured with a Primary Key as the default REPLICA IDENTITY.

CREATE TABLE tbl_a (id int PRIMARY KEY, name text, data int);

CREATE TABLE tbl_b (id int PRIMARY KEY, name text, data int);

INSERT INTO tbl_a VALUES (1, 'Alice', 100);

INSERT INTO tbl_b VALUES (10, 'Ken', 100);12.4.1.2. Scenario: Interleaved Transaction Processing

The timeline below illustrates the generation of WAL records by two concurrent transactions, txid 840 and txid 841, and the subsequent process by which the ReorderBuffer captures these changes.

T0: BEGIN; -- txid 840

T1: INSERT INTO tbl_a VALUES(2,'Bob',200);

T2:

T3: INSERT INTO tbl_b VALUES(11,'Luke',110);

T4:

T5:

T6: DELETE FROM tbl_b WHERE id=10;

T7: COMMIT;

T8:T0: BEGIN; -- txid 841

T1:

T2: INSERT INTO tbl_a VALUES(3,'Candy',3);

T3:

T4: UPDATE tbl_a SET data=data+1 WHERE id=1;

T5: UPDATE tbl_a SET data=data+1 WHERE id=1;

T6:

T7:

T8: COMMIT;Sequence of Operations:

- T0: Both txid 840 and txid 841 are initiated.

- T1: An INSERT statement is executed on tbl_a by txid 840.

- T2: An INSERT statement is executed on tbl_a by txid 841.

- T3: An INSERT statement is executed on tbl_b by txid 840.

- T4: An UPDATE statement is executed on tbl_a by txid 841.

- T5: A second UPDATE statement is executed on tbl_a by txid 841.

- T6: A DELETE statement is executed on tbl_b by txid 840.

- T7: txid 840 is committed, triggering the ReorderBuffer to finalize the accumulated changes for this transaction.

- T8: txid 841 is committed, and its accumulated changes are subsequently processed.

It is important to note that transaction isolation levels do not need to be considered during the logical decoding process.

This is because logical decoding reconstructs committed change logs (WAL) that have already been finalized and applied to tuples, having bypassed all visibility checks mandated by their respective isolation levels during execution. While Concurrency Control is a mechanism for governing tuple visibility during transaction execution, the decoding process focuses solely on reassembling the results of those transactions.

Therefore, the original isolation level of a transaction has no impact on the decoding logic itself.

The following provides a detailed explanation of how WAL data is captured at each step of this timeline.

12.4.1.3. Detailed State Changes

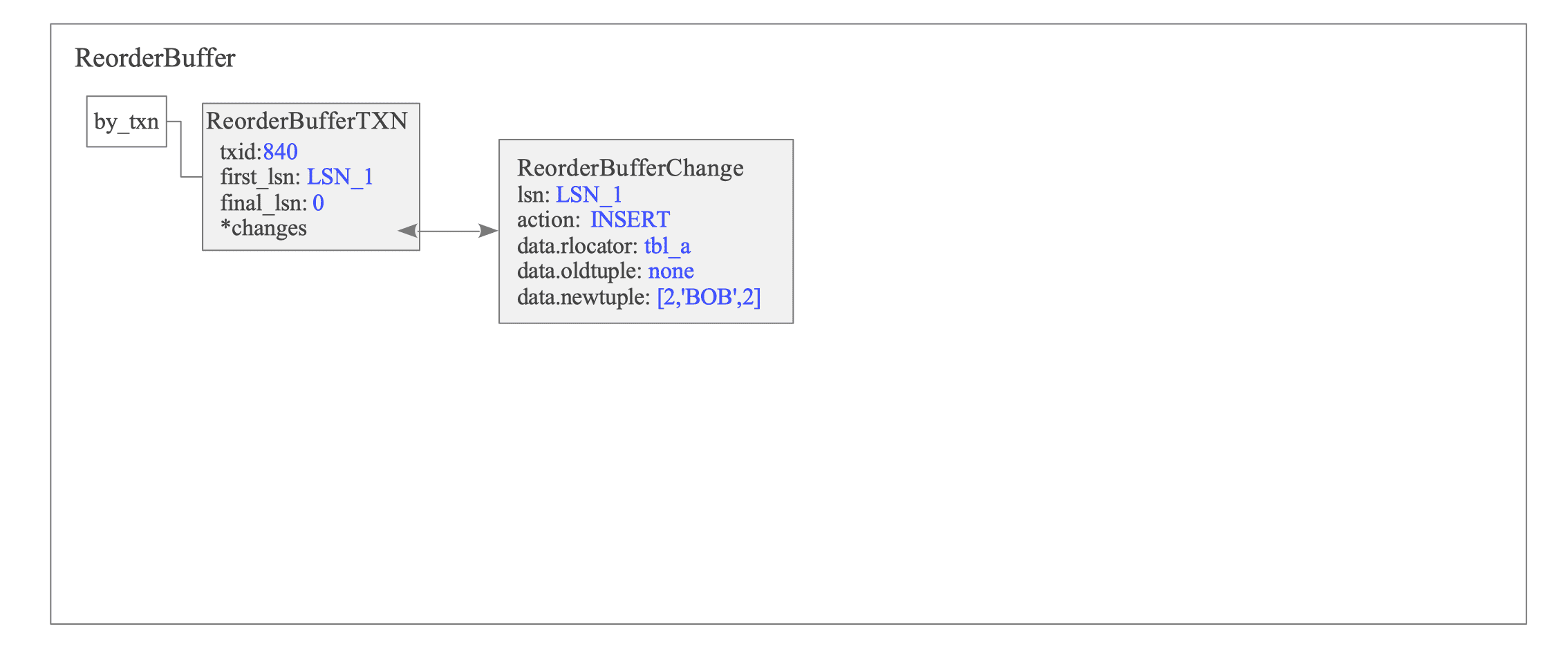

T1: INSERT statement executed on tbl_a by txid 840

A new ReorderBufferTXN struct is created by txid 840. Its first_lsn field is set to the LSN of the WAL data written by this INSERT statement, and a change record for this INSERT is accumulated to the changes list.

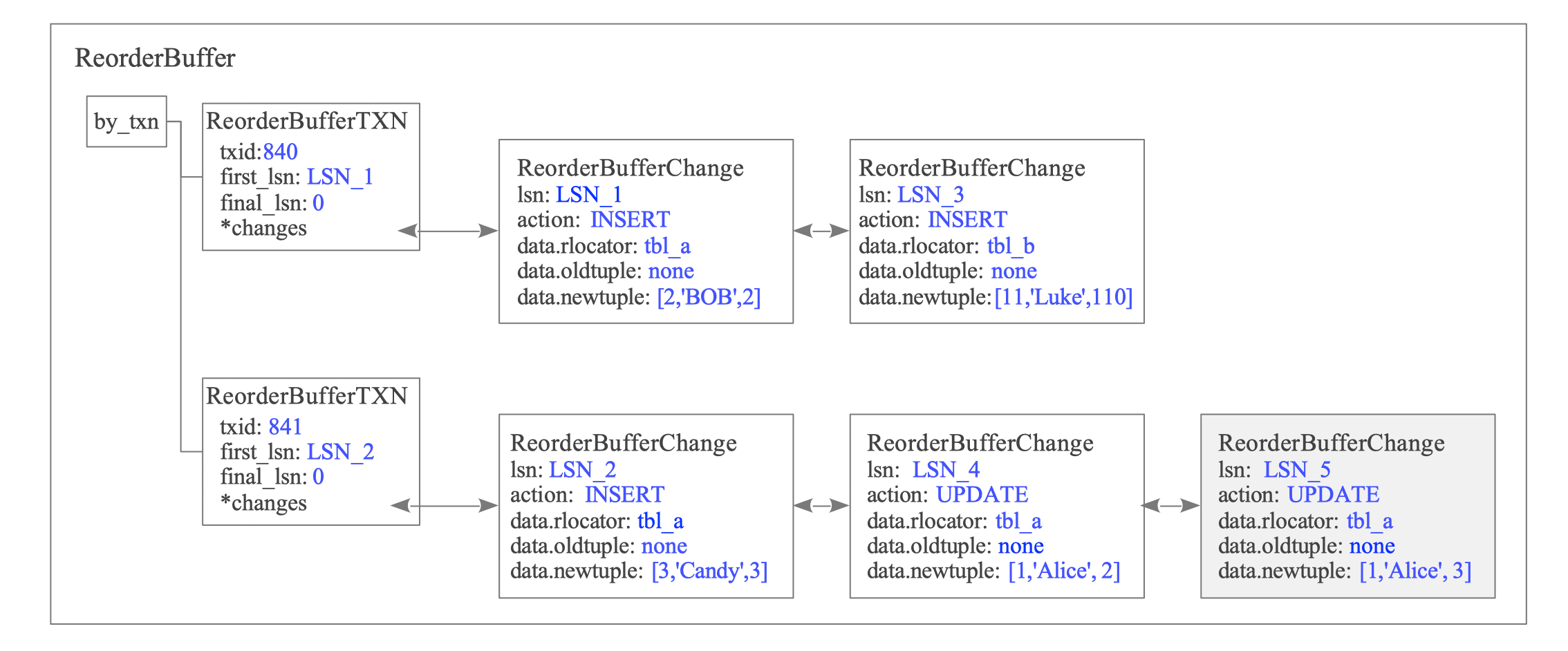

Figure 12.12. State of the ReorderBuffer after T1.

Individual changes are represented by the ReorderBufferChange structure. This structure encapsulates essential metadata, including the LSN of the associated WAL record, the specific action (in this instance, INSERT), and the target relation—uniquely identified by its tablespace, database, and relation OIDs. Additionally, the structure stores the actual tuple data introduced by the operation.

As discussed in Section 9.4.3.1, when wal_level is configured to logical, the main data portion of a Full Page Write (FPW) is augmented to include the actual modified tuple data.

This architectural enhancement allows the walsender to bypass the page block entirely during WAL retrieval. Instead of extracting tuples from the block itself, the walsender reads the tuple data directly from the main data section, significantly optimizing decoding efficiency by reducing data extraction overhead.

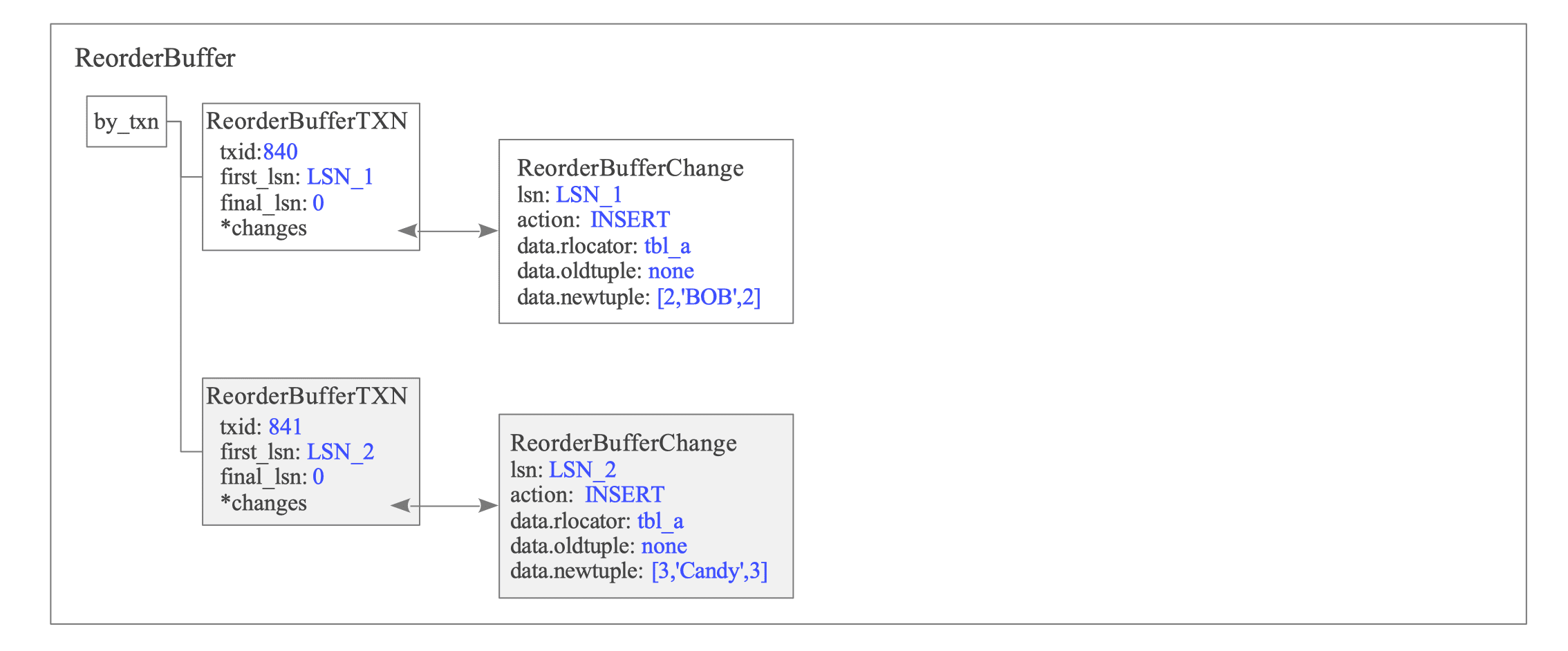

T2: INSERT statement executed on tbl_a by txid 841

A new ReorderBufferTXN structure is initialized for txid 841.

Figure 12.13. State of the ReorderBuffer after T2.

Changes for txid 840 and txid 841 are accumulated in independent sub-lists (often conceptualized as “buckets”), ensuring transactional isolation within the buffer.

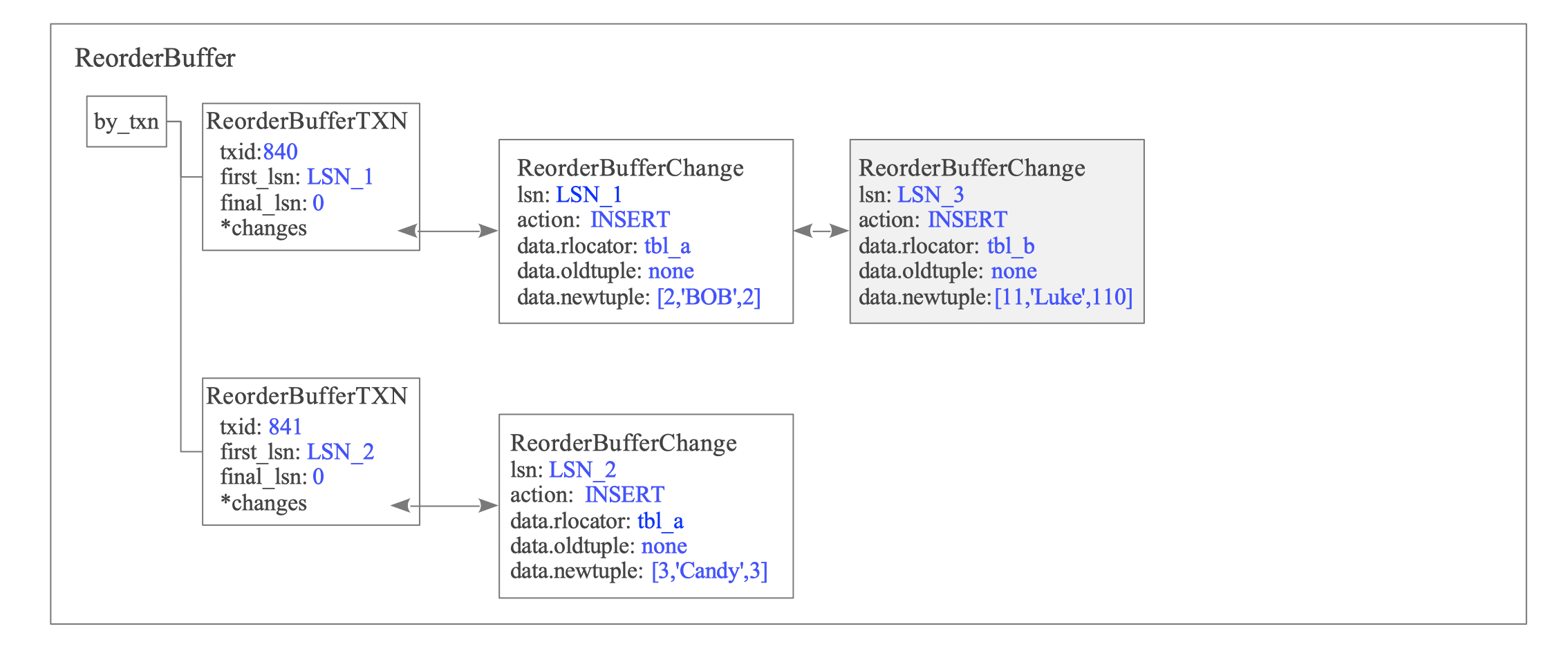

T3: INSERT statement executed on tbl_b by txid 840

The WAL data generated by the INSERT operation of txid 840 is appended to its specific changes list.

Figure 12.14. State of the ReorderBuffer after T3.

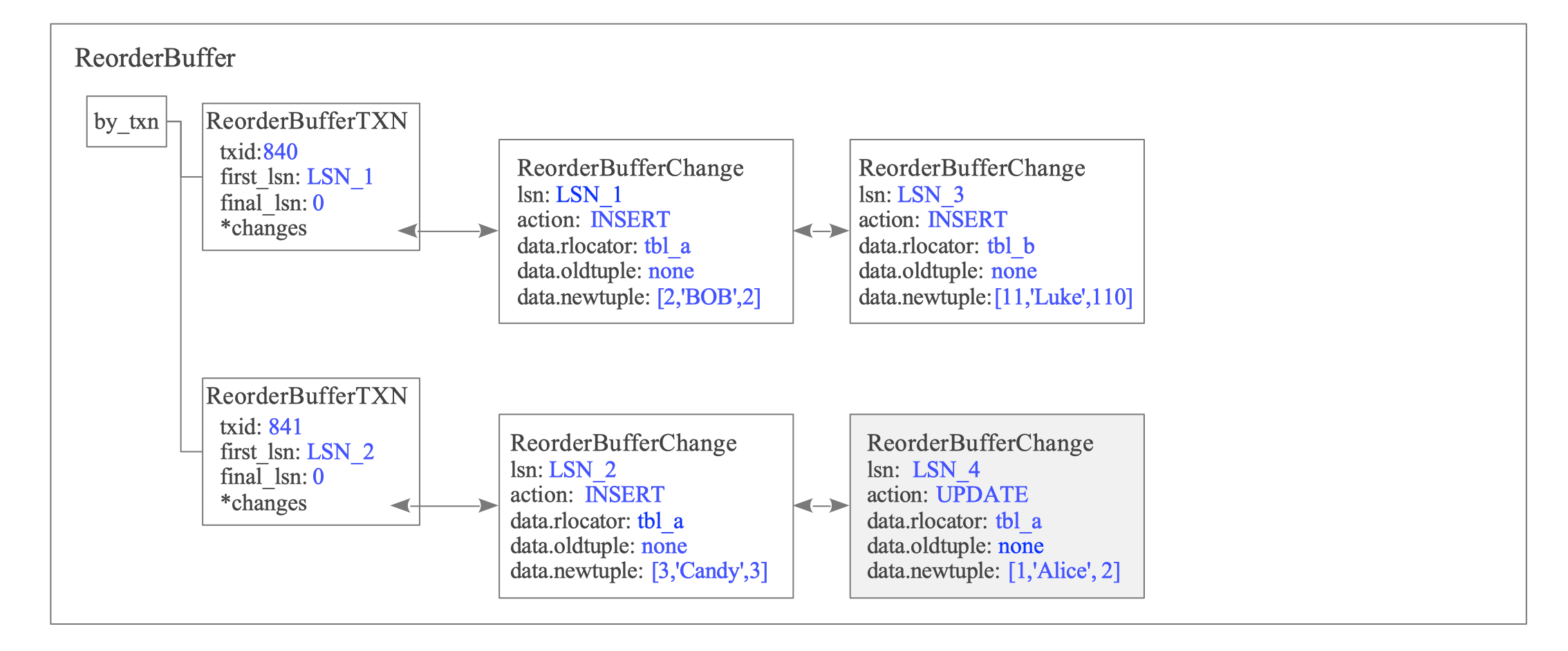

T4-T5: UPDATE statements executed on tbl_a by txid 841

txid 841 executes two sequential UPDATE statements on tbl_a.

First, the WAL data corresponding to the first UPDATE statement is appended to the changes list of txid 841.

Figure 12.15. State of the ReorderBuffer after T4.

Under the REPLICA IDENTITY DEFAULT configuration, the oldtuple is only recorded if the Primary Key columns are modified.

In this scenario, since the Primary Key (id) remains unchanged, the subscriber can uniquely identify the target tuple using the id = 1 provided in the newtuple. Consequently, the oldtuple is omitted as it is unnecessary for tuple identification.

Refer to Section 12.4.3 for further details on this behavior.

The following figure illustrates the state after the same tuple is updated again by the second UPDATE statement.

Figure 12.16. State of the ReorderBuffer after T5.

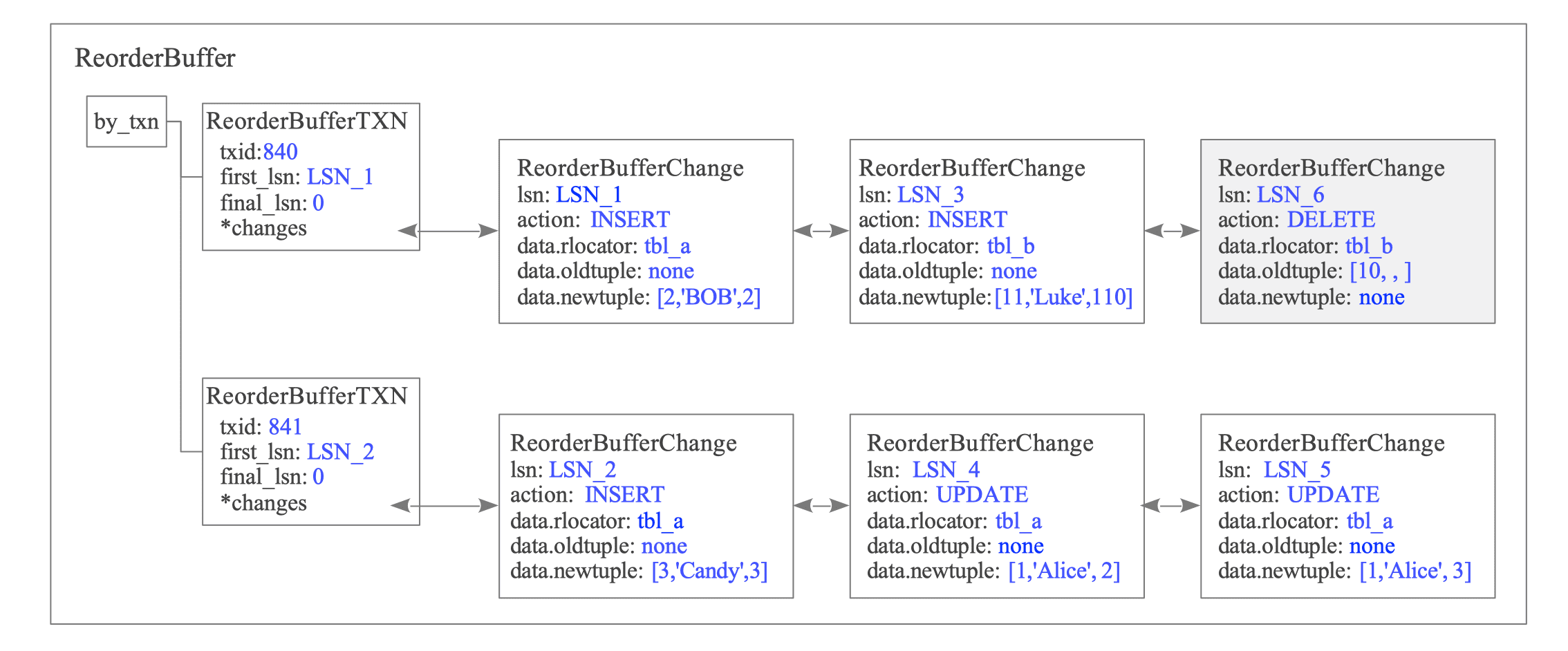

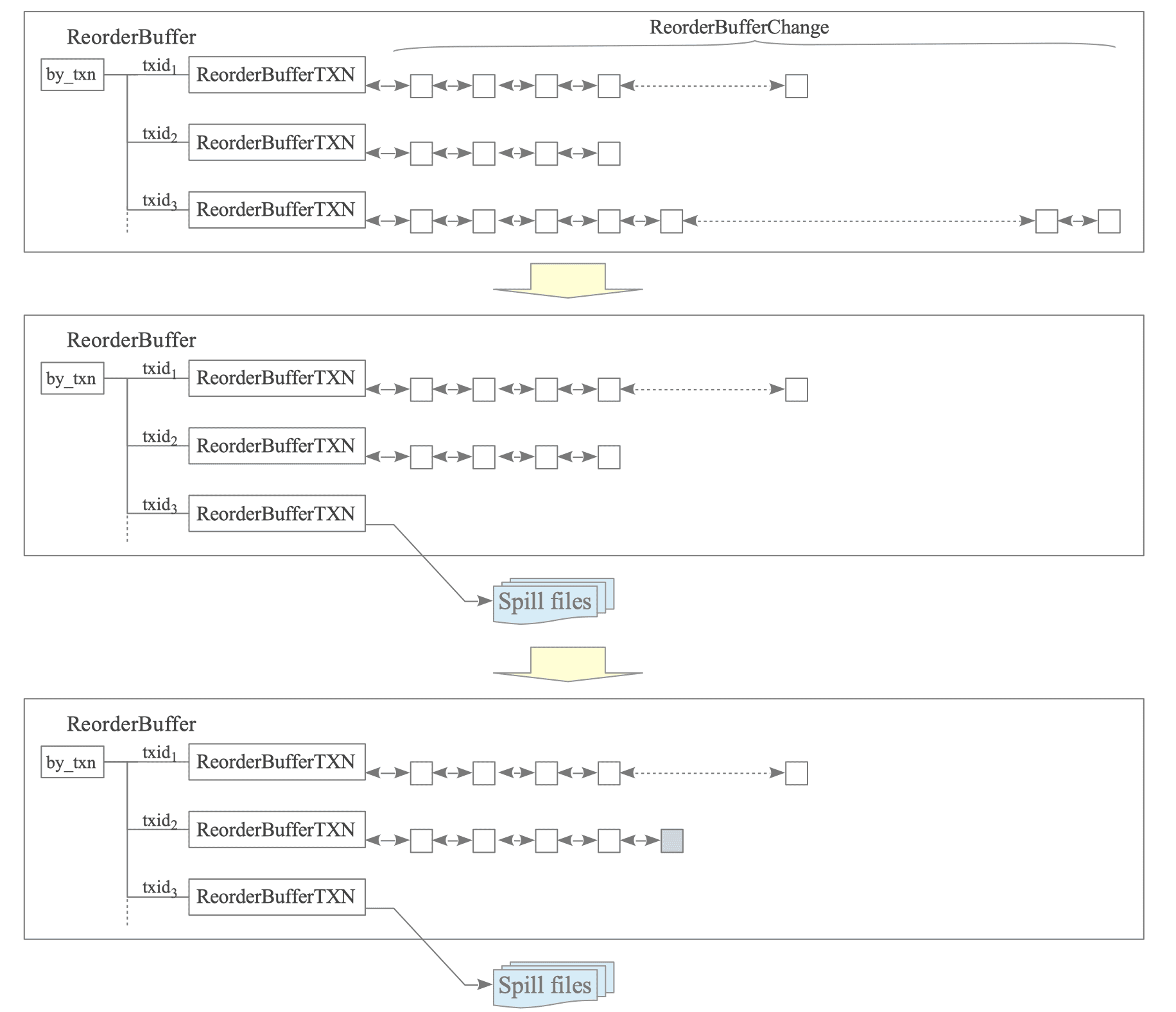

T6: DELETE statement executed on tbl_b by txid 840

When txid 840 deletes a row from tbl_b, the change record generated from the WAL data is appended to the changes list.

Figure 12.17. State of the ReorderBuffer after T6.

Only the Primary Key data, serving as the Replica Identity for the deleted tuple, is stored within this record.

Figure 12.18. Details of the DELETE change record containing only Primary Key data.

This design reflects the fact that a subscriber only requires the Key information to identify and delete the target tuple. By omitting non-key columns (such as name and data), PostgreSQL minimizes both memory consumption in the ReorderBuffer and network bandwidth during data sending.

For a detailed explanation of how oldtuple and newtuple are stored for UPDATE and DELETE commands, refer to Section 12.4.3.

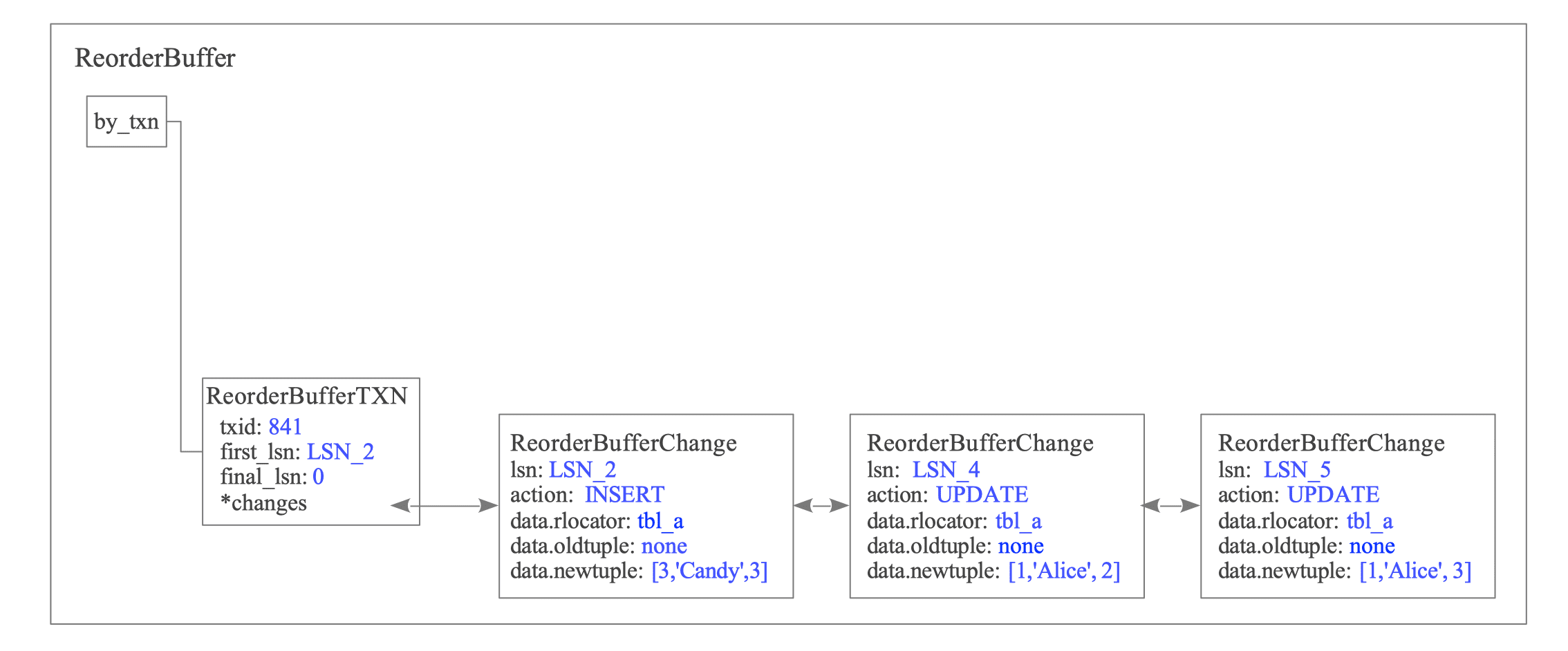

T7: COMMIT executed by txid 840

When txid 840 commits, the changes list within the corresponding ReorderBufferTXN is iterated from the head. Each change is passed to the Output Plugin, where it is formatted into a replication message and transmitted to the subscriber.

The reordering of changes and the sending message are described in Section 12.6.

Once the data sending is complete, the ReorderBufferTXN entry is removed from the ReorderBuffer.

Figure 12.19. State of the ReorderBuffer after T7.

T8: COMMIT executed by txid 841

Similarly, when txid 841 commits, its accumulated changes are reconstructed and sent to the subscriber in the same manner.

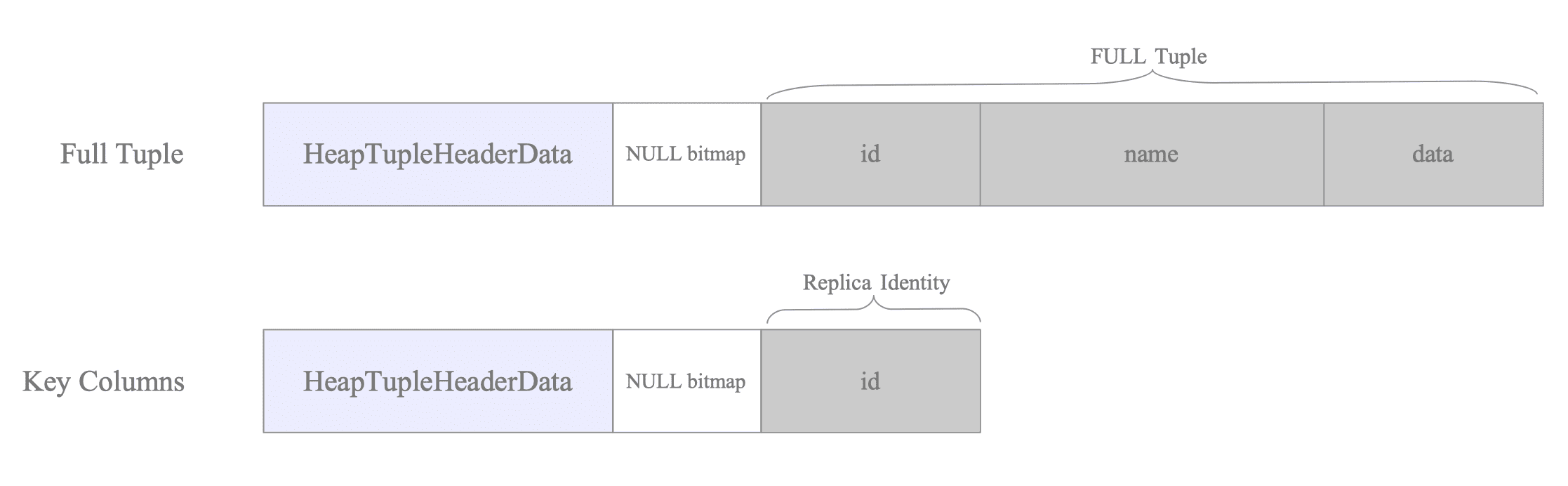

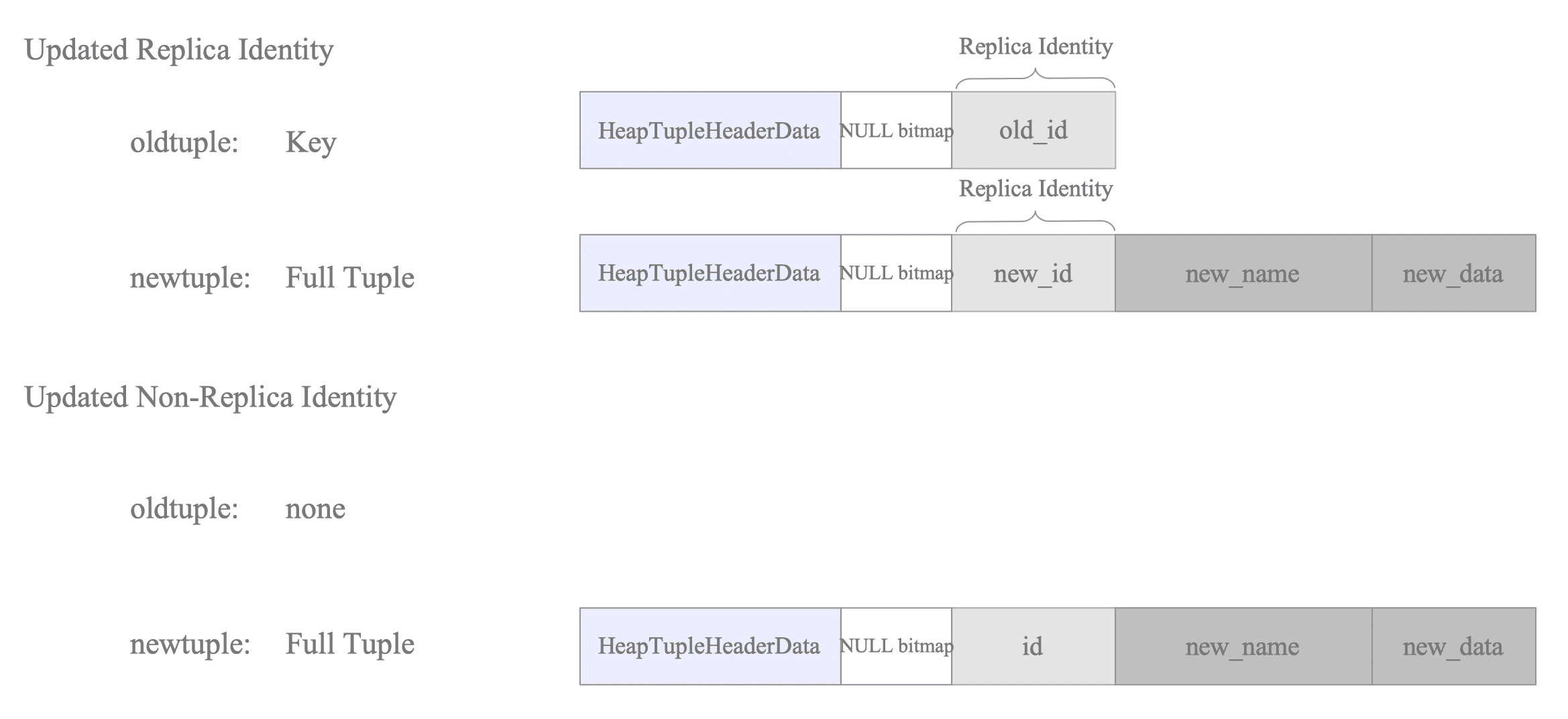

12.4.3. Relation between Replica Identity and Decoded Tuples

The data recorded in the WAL for UPDATE and DELETE operations is contingent upon the Replica Identity configured for the target table.

12.4.3.1. UPDATE Operations

The behavior of UPDATE operations differs depending on whether the Replica Identity is set to a specific key (Primary Key/Index) or to FULL.

First, consider the case where the Replica Identity is DEFAULT (PK) or USING INDEX:

The specific contents of oldtuple and newtuple vary based on which columns are being modified:

| Replica Identity | Update Type | oldtuple | newtuple |

|---|---|---|---|

| PK / Index | Non-key columns | <none> | Full New Tuple |

| PK / Index | Key columns | Key Columns | Full New Tuple |

Whether an oldtuple is recorded depends on whether the columns being updated are part of the Replica Identity. See Figure 12.20.

Figure 12.20. Payload structure for UPDATE operations under standard REPLICA IDENTITY.

When updating columns that constitute the Replica Identity, the oldtuple accumulates the previous key values. This allows the subscriber to identify the existing target tuple using these original values. This logic can be illustrated through the following pseudo-SQL:

-- Pseudo-SQL: Identifying the tuple via the old key

UPDATE tbl_a SET id = id + 100 WHERE id = 1;Conversely, if only non-identity columns are updated, the oldtuple remains empty (none). In this scenario, the subscriber identifies the target tuple using the key values already present in the newtuple.

-- Pseudo-SQL: Identifying the tuple via the current key

UPDATE tbl_a SET data = data + 100 WHERE id = 1;The Apply Worker does not assemble or execute text-based SQL queries. While it leverages the executor infrastructure, it bypasses the parsing and planning phases.

Instead, it executes direct tuple lookups—using index or sequential scans via functions such as RelationFindReplTupleByIndex—to identify and modify the target data.

When the Replica Identity is set to FULL, the oldtuple stores the entire tuple as it existed before the update, and the newtuple stores the entire updated tuple.

| Replica Identity | Update Type | oldtuple | newtuple |

|---|---|---|---|

| FULL | Any columns | Full Old Tuple | Full New Tuple |

Figure 12.21. Payload structure for UPDATE operations under REPLICA IDENTITY FULL.

This requirement stems from the fact that to uniquely identify the target tuple in a table without a formal key, the subscriber must match the values of all columns in its WHERE clause.

-- Pseudo-SQL: Full column matching required for identification

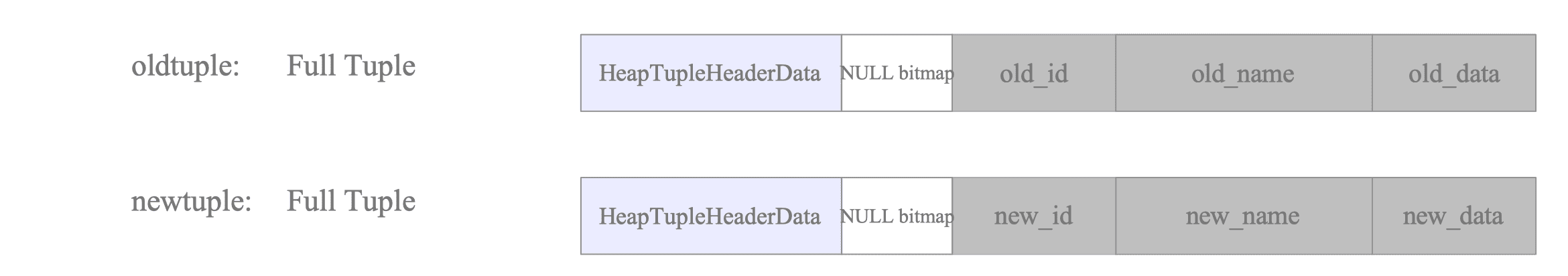

UPDATE tbl_a SET data = data + 100 WHERE id = 1 AND name = 'Alice' AND data = 100;12.4.3.2. DELETE Operations

For DELETE operations, only the oldtuple is recorded, as there is no subsequent state to report:

| Replica Identity | oldtuple | newtuple |

|---|---|---|

| PK / Index | Key Columns | <none> |

| FULL | Full Old Tuple | <none> |

When using a PK or Index identity, only the key is preserved in the oldtuple, as this information is sufficient for the subscriber to locate and remove the target tuple.

In contrast, the FULL configuration necessitates accumulating the entire oldtuple to ensure the subscriber can accurately identify the specific tuple to be deleted.

Figure 12.22. Identification of tuples for DELETE operations via oldtuple data.

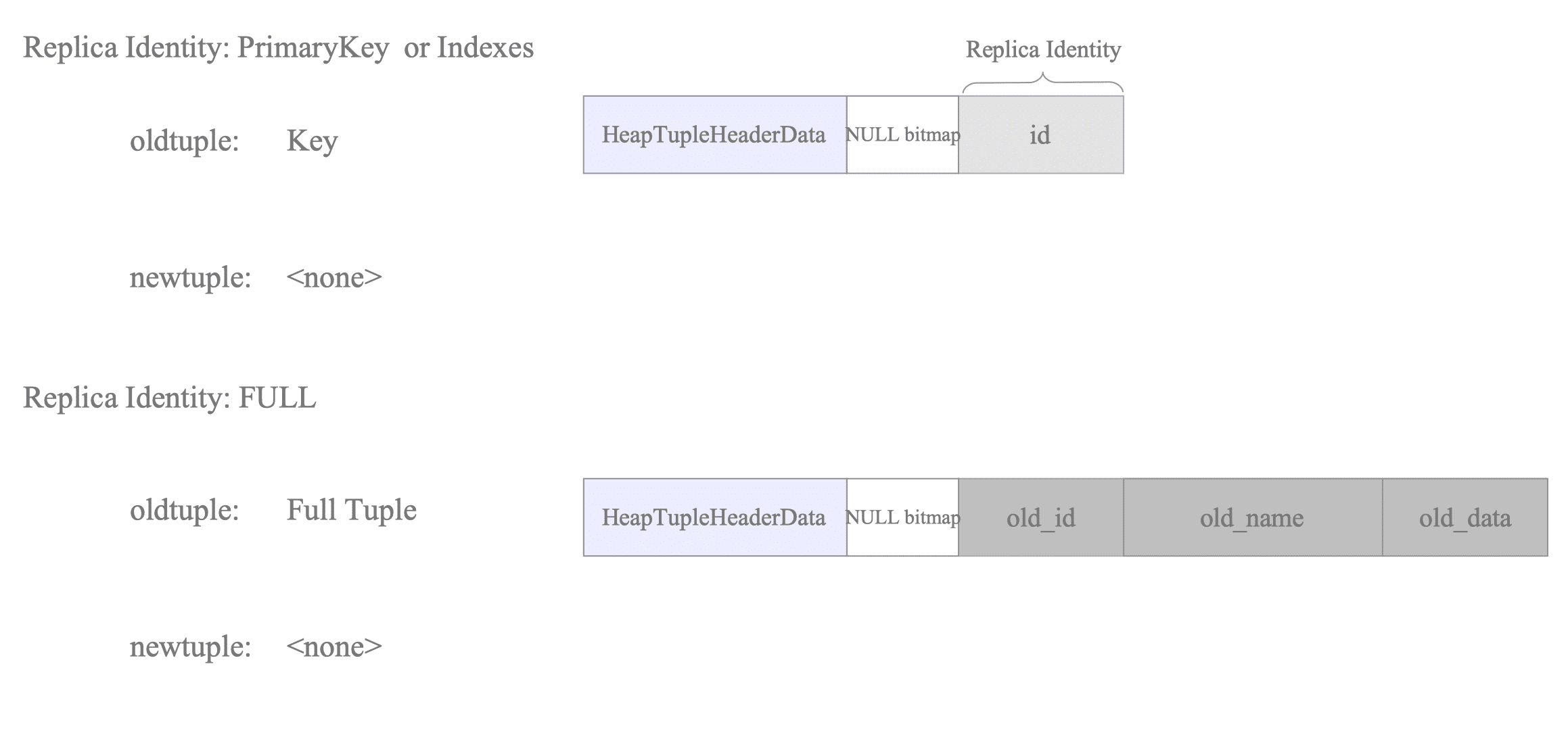

12.4.4. ReorderBuffer Memory Management and Transaction Serialization (Spill-to-Disk)

To prevent memory exhaustion when processing large transactions, the ReorderBuffer implements a spill-to-disk mechanism. This process is triggered whenever the cumulative memory consumption of all buffered transactions exceeds the threshold defined by the logical_decoding_work_mem parameter.

12.4.4.1. The Spillover Algorithm

The following steps outline how the ReorderBuffer manages its memory footprint:

- Monitor Memory Usage: For every new WAL record appended to the ReorderBuffer, the total tracked memory size is incremented by the size of the newly added ReorderBufferChange record.

- Evaluate Threshold: The current memory size is compared against the logical_decoding_work_mem limit.

- Trigger Eviction (if threshold exceeded):

- Identify the Target: The system identifies the “heaviest” transaction—the one currently accumulating the largest number of buffered changes.

- Serialize Data: All buffered changes for that specific transaction are written into a

.spillfile within the$PGDATA/pg_replslot/<slot_name>directory. - Memory Reclamation: The memory allocated for the serialized changes is released, though the transaction’s metadata (the ReorderBufferTXN structure) remains in the buffer.

- Update Transaction State: The transaction’s status is updated to serialized = true. This flag indicates that the data must be read back from disk during the final decoding phase (e.g., at commit time).

Figure 12.23 illustrates a scenario where a transaction is spilled to disk to accommodate incoming data:

Figure 12.23. Memory management in the ReorderBuffer and spilling to disk.

- [1] A new WAL record from $\text{txid}_{2}$ is being appended, but the ReorderBuffer has already reached its capacity.

- [2] The system identifies $\text{txid}_{3}$ as the transaction with the most accumulated changes. Its changes are serialized to a spill file, and the associated memory is released.

- [3] The changes for $\text{txid}_{2}$ are successfully added to the ReorderBuffer using the newly available space.

PostgreSQL prioritizes spilling the “heaviest” transaction rather than flushing all transactions simultaneously. This approach minimizes disk I/O while effectively keeping memory usage within the allowed limits.

12.4.4.2. Spill File Structure and Naming Conventions

Spill files are stored within the replication slot directory:

$PGDATA/pg_replslot/<slot_name>/

└── xid-856-lsn-0-6000000.spill

└── xid-856-lsn-0-7000000.spill

└── xid-856-lsn-0-8000000.spillThe naming convention for these files is designed to allow unique identification by transaction ID and LSN range:

Format: xid-[XID]-lsn-[LSN_HIGH]-[LSN_LOW].spill

- XID: The transaction ID (represented in decimal).

- LSN_HIGH: The upper 32 bits of the LSN (represented in hexadecimal).

- LSN_LOW: The lower 32 bits of the LSN (represented in hexadecimal).

Practical Example:

$ ls -l -h $PGDATA/pg_replslot/myslot/

total 68M

-rw------- 1 postgres postgres 200 Mar 24 08:12 state

-rw------- 1 postgres postgres 30M Mar 24 08:12 xid-856-lsn-0-6000000.spill

-rw------- 1 postgres postgres 34M Mar 24 08:12 xid-856-lsn-0-7000000.spill

-rw------- 1 postgres postgres 4.5M Mar 24 08:12 xid-856-lsn-0-8000000.spill